This column is about AI. My boyfriend works at Anthropic, and I co-host a podcast at The New York Times, which is suing OpenAI and Microsoft over alleged copyright infringement. For full details, see my complete ethics disclosure.

I.

How social platforms like Instagram and TikTok affect public well-being is a hotly debated question with few clear answers. In 2023, the U.S. Surgeon General issued a report warning that social networks may harm young people's mental health, while other research has concluded that these networks have no significant effect on the well-being of the population as a whole.

As the debate continues, numerous states have passed laws restricting social media use in response to these perceived risks. Those laws have faced legal challenges, however, and courts have blocked them on First Amendment grounds.

While that debate remains unresolved, a new front is opening. Last year, a Florida mother sued the chatbot company Character.ai, alleging that it was responsible for her 14-year-old son's suicide. Meanwhile, millions of Americans, young people and adults alike, are forming emotional and sexual relationships with chatbots.

Over time, chatbots are likely to become even more engaging than conventional social media. They will be personalized, speak in realistic human voices, and be designed to comfort and support their users.

II.

These concerns are at the heart of two recent studies from researchers at the MIT Media Lab and OpenAI. More research is needed before drawing firm conclusions, but the findings echo earlier research on social media and offer a warning to companies building chatbots optimized for engagement.

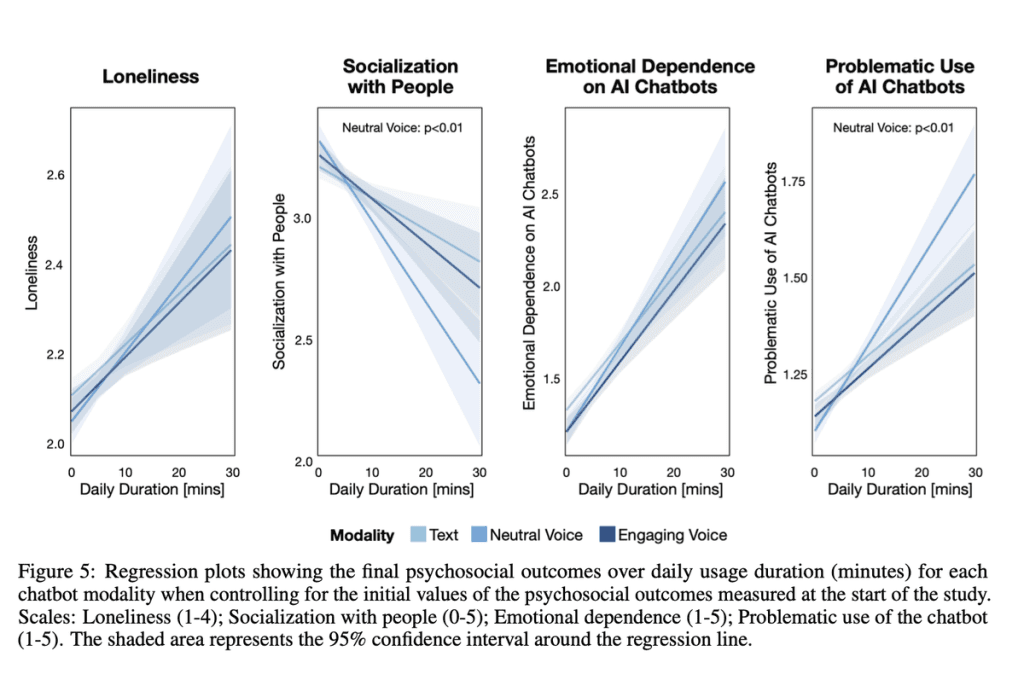

In the first study, researchers analyzed more than 4 million ChatGPT conversations from 4,076 participants, then surveyed those participants about how the interactions made them feel. The second study followed 981 people through a four-week trial in which they used ChatGPT for at least five minutes a day. Afterward, participants reported on their experience with ChatGPT, their feelings of loneliness, their social interactions, and how they perceived their own use of the chatbot.

Most users had a neutral relationship with ChatGPT, treating it as an ordinary tool. But a subset of heavy users, the top 10 percent by time spent, showed concerning patterns. Heavier use of ChatGPT was associated with greater loneliness, more emotional dependence on the chatbot, and less socializing with other people.

OpenAI researcher Jason Phang cautioned that the findings are preliminary and need further validation. The studies do not claim that heavy ChatGPT use causes loneliness; rather, they suggest that lonely people may be drawn to chatbots for emotional support, mirroring earlier findings that linked social media use with loneliness.

III.

Most of today's chatbots, including ChatGPT, are designed primarily as productivity tools, but others, such as Character.ai and Replika, are built specifically to foster emotional connection. These platforms encourage users to develop deeper relationships with AI companions and offer a range of subscription plans built around those ongoing interactions.

Chatbots have real value, but the MIT and OpenAI research points to a risk: a sufficiently engaging chatbot could crowd out human relationships, deepening users' loneliness and their dependence on AI companions that charge an ongoing fee for access.

To reduce these risks, platforms should monitor engagement patterns for signs of unhealthy reliance on their chatbots. Automated classifiers that flag such reliance, along with reminders when a user's daily usage becomes excessive, could help. The goal is not to declare chatbots universally harmful or beneficial, but to recognize that their effects depend heavily on how people use them and how they are designed.

Regulators may also need to warn platforms against building addictive chatbot business models by exploiting lonely users. And many of the rules now aimed at social media may eventually be extended to cover AI as well.

Despite these concerns, I believe chatbots can offer users significant benefits. Many people lack adequate emotional support, and an accessible, understanding companion could be genuinely therapeutic for them. To realize those benefits, though, chatbot makers must take responsibility for their products' effects on users' mental health, and learn from how slowly social networks responded to the harms their services caused.